Scroll through any platform today and you’ll see it instantly content everywhere. Short clips, fast edits, bold captions. The digital world is loud, and speed often wins over depth. But for creators who care about storytelling real storytelling the challenge isn’t posting more. It’s building something that feels cohesive, believable, and visually consistent from start to finish. And that’s where things usually get complicated.

Traditional video production has always carried friction. Good cameras are expensive. Lighting setups take space. Editors and sound designers bring skill and invoices. For independent creators or small brands, this can turn a creative idea into a logistical headache.

Seedance 2.0 approaches that problem differently. Instead of asking creators to obsess over every frame, it shifts the focus toward intention. What’s the story? What’s the mood? Where is the camera? The system handles the heavy mechanics physics, lighting, sound so the creator can think more like a director than a technician.

The Real Problem: When Continuity Breaks the Illusion

If you’ve ever experimented with early generative video tools, you probably noticed something unsettling. A character’s face subtly changes between shots. Clothing textures morph. Background elements flicker. It’s small, but once you see it, you can’t unsee it. And the audience definitely notices.

When visual continuity breaks, immersion collapses. The brain is wired to detect inconsistency. If a character looks different in every cut, the story stops feeling real even if the concept is strong.

Many creators end up spending hours in post-production trying to fix issues that shouldn’t exist in the first place. Patching glitches. Masking artifacts. Rebuilding scenes. It defeats the purpose of “automation.” What’s changed recently is how models approach time itself.

Solving Temporal Instability in Generative Video

The core difficulty in AI filmmaking has always been temporal stability making sure the world remains stable as the video progresses.

Earlier systems essentially guessed the next frame. Then guessed again. And again. That’s why movement often felt jittery or slightly warped, like the scene was breathing in an unnatural way.

The newer architectural shift treats video as a continuous 3D volume rather than a stack of disconnected 2D images. That difference sounds technical, but the effect is simple: motion starts to feel grounded.

When someone walks, their body carries momentum. When light hits an object, it behaves consistently across frames. Shadows don’t flicker unpredictably. The environment feels anchored. It’s subtle but it’s the difference between “AI-generated clip” and “cinematic footage.”

Why Spatial and Temporal Modeling Matter

Under the hood, one of the quiet breakthroughs is the separation of spatial and temporal attention. Spatial modeling focuses on what’s inside a frame textures, lighting, skin detail, fabric patterns. It’s about fidelity.

Temporal modeling governs how those details evolve over time how a character turns their head, how clothing folds during movement, how perspective shifts as the camera tracks.

By combining both within a Diffusion Transformer architecture, the system reduces the infamous “morphing” effect. You know the one when faces subtly reshape mid-motion or objects blend into each other for a split second.

The result? Characters maintain identity. Movements feel intentional. The illusion holds. And once continuity holds, storytelling becomes possible.

The Quiet Revolution: Native Audio Generation

Visual realism is only half the equation. Silent AI videos have always felt incomplete. Even beautifully rendered clips lose depth without sound.

Traditionally, adding audio meant hunting through sound libraries, dragging clips onto a timeline, trimming, syncing, adjusting levels and repeating. Now, multimodal generation changes that dynamic.

Instead of building sound afterward, the system generates it alongside the visuals. If the scene describes a busy city street, you don’t just see movement you hear distant traffic, subtle footsteps, ambient crowd noise. And importantly, these sounds are time-aware.

If a door closes in the scene, the audio matches the exact moment of impact. Not approximately. Precisely. That synchronization removes one of the most tedious phases of video production. It also adds immersion that silent clips simply can’t replicate.

Where 2026 Standards are Heading

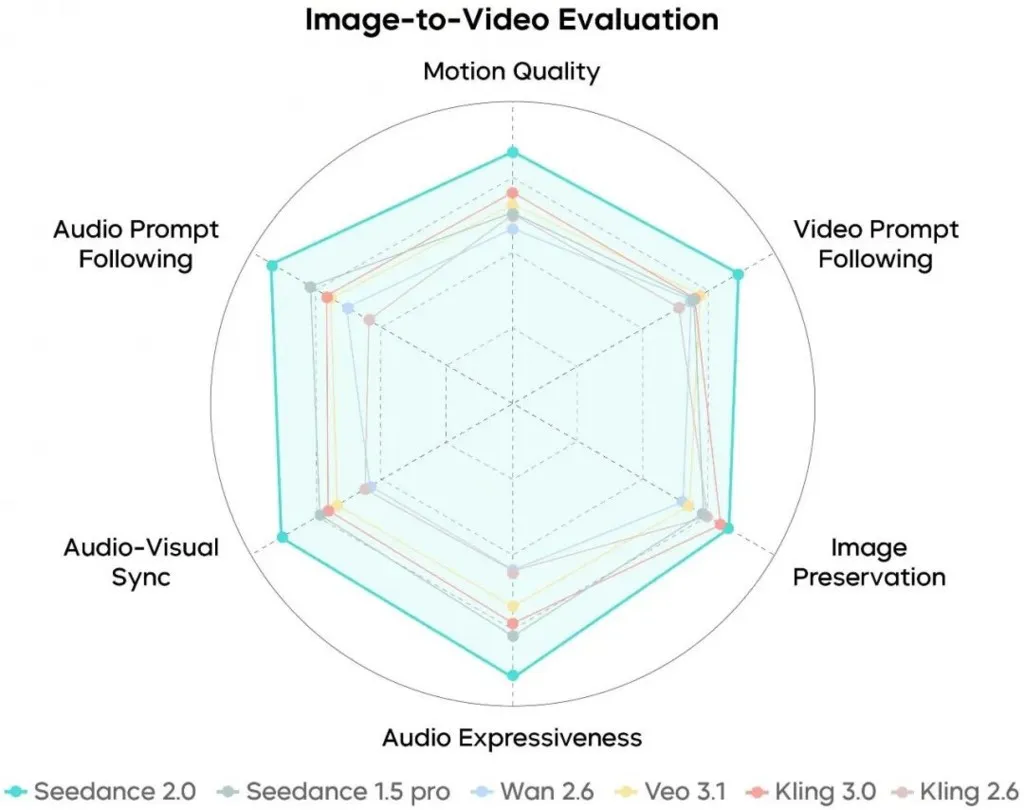

To understand the leap, it helps compare typical baseline AI video output with Seedance 2.0’s current standards:

- Resolution: Many baseline systems still cap out around 720p with noticeable noise. Seedance outputs full 1080p in high definition.

- Character Consistency: Older models struggle to preserve identity across shots. Here, cross-shot consistency is significantly more stable.

- Motion Realism: Instead of linear or jitter-heavy motion, movement follows spatial-temporal physics.

- Audio Workflow: Manual synchronization versus native environmental sound synthesis.

- Narrative Length: From isolated 5–10 second clips to structured sequences up to 60 seconds.

- User Input Logic: Less keyword guessing, more director-level intent processing.

That extension to 60 seconds may sound modest, but narratively, it changes everything. You can build an arc. Introducing a character. Create tension. Deliver payoff.

For product storytelling, cinematic shorts, or immersive brand pieces, that duration unlocks structure.

A Workflow That Feels Surprisingly Practical

One of the more refreshing aspects is how streamlined the production process feels. It breaks down into four intuitive stages.

1. Describe the Vision

This is the creative phase. You outline characters, environment, mood, and camera movement. The more clearly you describe lighting and tone, the more accurate the interpretation becomes.

It feels less like coding and more like briefing a cinematographer.

2. Configure the Parameters

Here you define the technical structure aspect ratio (16:9, 9:16, 1:1), resolution, and duration. Whether you’re producing for widescreen YouTube or vertical mobile formats, the setup adjusts accordingly.

3. Automated Processing

The system generates a 480p preview first. This is important. It allows quick evaluation without committing computational resources to a full render.

Once approved, it upscales to 1080p and generates synchronized audio simultaneously.

That preview-first logic encourages iteration rather than guesswork.

4. Final Review and Export

The finished asset exports as a clean MP4, ready for distribution or further refinement in a professional suite if desired. No watermark. No convoluted pipeline. Just output.

The Reality Check: It’s Not Magic

For all the progress, generative video isn’t flawless. Complex physical interactions, two people shaking hands, intricate mechanical motion, overlapping choreography can still produce inconsistencies. The output quality remains tied to the clarity of the input prompt.

It’s not a one-click masterpiece machine. The most effective workflow is iterative. Generate. Evaluate. Refine the description. Adjust tone or camera cues. Generate again.

That loop is faster than traditional reshoots but it’s still a creative process. And maybe that’s a good thing.

The Bigger Shift: Democratizing Cinematic Production

What’s really happening here isn’t just better rendering. It’s access.

For years, cinematic production value was limited by budget, equipment, and team size. Now, much of the heavy lifting physics simulation, lighting accuracy, environmental sound is automated. That frees creators to experiment.

To prototype ideas rapidly. To test visual narratives before investing heavily. To move from concept to high-quality output in hours rather than weeks.

As we move deeper into 2026, high-fidelity, coherent, synchronized video won’t be a luxury. It will be the baseline expectation. And the tools that make that possible are shifting the role of the creator from technician to storyteller again.

In the end, technology fades into the background. What remains is narrative continuity, believable motion, immersive sound, and a visual world that doesn’t break under scrutiny.

When those elements align, the only real limit left is imagination.

Disclaimer: This post was provided by a guest contributor. Coherent Market Insights does not endorse any products or services mentioned unless explicitly stated.