Engineering teams don't miss deadlines because the analysis is hard. They miss deadlines because everything around the analysis (tagging elements, managing load combinations, running code checks by hand, producing reports nobody reads until the client asks for revisions) eats time that should go toward actual engineering. McKinsey's research puts it plainly: global construction inefficiencies cost USD 1.6 trillion annually, with budget overruns ranging from 20% to 45%. Structural verification, the final stage where designs get checked against codes, is one of the worst bottlenecks.

The structural analysis software market is responding. It crossed USD 7.8 billion in 2025 and is growing at over 12% annually, driven by platforms that don't just solve equations but automate the entire post-processing workflow. Some teams report up to 80% time savings on verification tasks. Not because the math changed, but because the manual labor around it disappeared.

Where Engineering Hours Actually Go

Ask a project manager where the schedule slipped, and the answer is rarely “the solver ran too long.” It's the work before and after the solver that bleeds hours.

Engineers who work with FEA models spend 50–60% of their time on pre-processing: geometry cleanup, meshing, boundary conditions. That is time not spent interpreting results or making design decisions. Post-processing is no better. Extracting results, formatting spreadsheet checks, writing verification reports: all manual, all repetitive, all vulnerable to human error.

Five tasks consume a disproportionate share of engineering time on any structural project.

- Start with element identification and tagging. On a typical offshore model, manually identifying beams, plates, welds, and stiffened panels can take days. Every time the mesh changes, the tagging needs updating. Automatic recognition tools (weld finders, panel finders, beam member finders) cut this from days to minutes.

- Then code compliance checking. A single beam-column against Eurocode 3 means running cross-section classification, flexural buckling, lateral-torsional buckling, and combined interaction checks — each governed by different clauses depending on the member's loading and restraint conditions. Multiply that by hundreds of members and dozens of load combinations. By hand, it's weeks. Automated, it's hours.

- Load combination management is just as bad. Real projects don't have ten load cases. They have hundreds. An FPSO module might carry 300+ combinations for ULS, blast, heel, and fatigue. Finding the governing loads by hand ties up senior engineers on work that is tedious yet too consequential to delegate to a junior.

- Report generation, too. Verification reports (model descriptions, check summaries, utilization plots, code references) routinely take 20–30% of total project time. When the model changes, the report needs rewriting from scratch.

- And post-change rework. A client requests a design change on Thursday. The model updates, but every downstream check, every report section, every exported table is now stale. Without automation, the team spends Friday and Monday redoing work that was already done.
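To make the code-check bullet concrete, here is a minimal Python sketch of just one of the checks it lists: flexural buckling under pure compression, using the reduction-factor formulas of EN 1993-1-1 clause 6.3.1. It assumes a Class 1 to 3 cross-section and a partial factor of 1.0 (national annexes may differ). The member properties, forces, and function names are hypothetical and are not taken from any particular tool. A full beam-column check would add classification, lateral-torsional buckling, and interaction checks on top of this.

```python
import math

# Imperfection factors alpha for buckling curves a0..d (EN 1993-1-1, Table 6.1).
ALPHA = {"a0": 0.13, "a": 0.21, "b": 0.34, "c": 0.49, "d": 0.76}

def flexural_buckling_utilization(n_ed, area, inertia, l_cr, f_y,
                                  curve="b", e_mod=210_000.0, gamma_m1=1.0):
    """Utilization N_Ed / N_b,Rd for a Class 1-3 section.

    Units: N, mm^2, mm^4, mm, MPa. gamma_m1 = 1.0 is an assumed value.
    """
    n_cr = math.pi ** 2 * e_mod * inertia / l_cr ** 2   # Euler critical load
    lam = math.sqrt(area * f_y / n_cr)                  # non-dimensional slenderness
    if lam <= 0.2:
        chi = 1.0                                       # stocky: no buckling reduction
    else:
        phi = 0.5 * (1.0 + ALPHA[curve] * (lam - 0.2) + lam ** 2)
        chi = min(1.0, 1.0 / (phi + math.sqrt(phi ** 2 - lam ** 2)))
    return n_ed / (chi * area * f_y / gamma_m1)

# Hypothetical members and axial forces per load combination (illustrative only).
members = {
    "col-1": dict(area=5380.0, inertia=2.0e7, l_cr=4000.0, f_y=355.0, curve="c"),
    "col-2": dict(area=5380.0, inertia=2.0e7, l_cr=7000.0, f_y=355.0, curve="c"),
}
axial_forces = {"ULS-1": 600e3, "ULS-2": 850e3}  # N, applied to every member here

# Run the check for every member under every combination, not a hand-picked few.
utilizations = {
    (name, combo): flexural_buckling_utilization(n_ed, **props)
    for name, props in members.items()
    for combo, n_ed in axial_forces.items()
}
for key, util in sorted(utilizations.items()):
    print(key, f"{util:.2f}", "OK" if util <= 1.0 else "FAIL")
```

The loop at the end is the part that scales: the same function runs unchanged whether the model has two members or two thousand.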

Recognition Tools: The Efficiency Multiplier Nobody Talks About

Most discussions about structural analysis software focus on the solver. Fair enough. Accuracy matters. But the biggest productivity gains come from what happens after the solver finishes.

Take element recognition. In SDC Verifier (https://sdcverifier.com/software/sdc-verifier/), the Panel Finder scans a shell model and identifies plates, stiffeners, and their dimensions: length, width, thickness, orientation. All without manual input. The Weld Finder detects connection nodes and transforms stresses into weld-local coordinate systems. The Joint Finder recognizes connection types and bracing points in beam models, providing the boundary condition data needed to calculate effective buckling lengths.

On a crane structure with 4,000 elements or an offshore jacket with 10,000, doing this by hand takes days. Automated, it takes minutes. And it's consistent. No variation between engineers, no forgotten stiffeners, no misclassified welds.

Once elements are recognized, code checks run across every element under every load combination. Not just the governing members an engineer would pick by intuition, but all of them. The governing loads tool extracts critical results from hundreds of combinations automatically, so engineers focus on what actually matters instead of hunting through data. That shift, from reviewing everything to reviewing only what failed, is where most of the time savings actually come from.
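The idea of governing-load extraction can be sketched in a few lines of Python. This is an illustration of the concept only, not how any particular tool implements it. The member names, combination labels, and utilization values are made up, and the function names are hypothetical.

```python
def governing_combinations(results):
    """Map each member to its worst (combination, utilization) pair.

    `results` is an iterable of (member, combination, utilization) tuples.
    """
    governing = {}
    for member, combo, util in results:
        if member not in governing or util > governing[member][1]:
            governing[member] = (combo, util)
    return governing

def needs_review(governing, limit=1.0):
    """Keep only members whose governing utilization exceeds the limit."""
    return {m: pair for m, pair in governing.items() if pair[1] > limit}

# Hypothetical check results: every member under every combination.
results = [
    ("beam-7", "ULS-012", 0.64), ("beam-7", "ULS-187", 0.91),
    ("beam-9", "ULS-012", 1.08), ("beam-9", "BLAST-03", 0.77),
]
governing = governing_combinations(results)
print(governing["beam-7"])       # ('ULS-187', 0.91)
print(needs_review(governing))   # {'beam-9': ('ULS-012', 1.08)}
```

Everything is still checked, but the engineer's attention goes only to what `needs_review` returns.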

Reporting: From Bottleneck to Background Task

Anyone who's assembled a 200-page verification report by hand knows the pain. Screenshots pasted into Word. Tables copied from Excel. Cross-references that break every time a section moves. And when the model updates, well, that's another late evening.

“With SDC Verifier, we streamlined tedious tasks like code checks and report preparation, saving over 70% on report generation. Automated workflows reduced project time by 20–30%, even with 8 complex load cases and over 650 000 elements.” — Mihir Jha, Director, Cosimtec Pte Ltd

Automated reporting tools change the calculus entirely. Reports generate in Word, PowerPoint, or PDF format directly from the model, complete with descriptions, results tables, and visual plots. Change the model, rerun the checks, and the report regenerates automatically. No copy-paste, no reformatting, no mismatched revision numbers.
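The reason regeneration is cheap is that the report is a function of the results rather than a hand-edited document. A minimal Python sketch of that principle follows. It renders plain text rather than Word or PDF, and the function name, model name, and data are hypothetical rather than any vendor's reporting API.

```python
def render_report(model_name, revision, governing, limit=1.0):
    """Render a plain-text check summary from governing results.

    `governing` maps member name -> (combination, utilization).
    """
    lines = [
        f"Verification summary: {model_name} (revision {revision})",
        "",
        f"{'Member':<12}{'Governing combo':<18}{'Utilization':>12}  Status",
    ]
    for member in sorted(governing):
        combo, util = governing[member]
        status = "OK" if util <= limit else "FAIL"
        lines.append(f"{member:<12}{combo:<18}{util:>12.2f}  {status}")
    failed = sum(1 for _, util in governing.values() if util > limit)
    lines += ["", f"Members checked: {len(governing)}, failed: {failed}"]
    return "\n".join(lines)

# Hypothetical results before and after a design change.
rev_a = {"beam-7": ("ULS-187", 0.91), "beam-9": ("ULS-012", 1.08)}
rev_b = {"beam-7": ("ULS-187", 0.91), "beam-9": ("ULS-012", 0.94)}

print(render_report("Module M3", "A", rev_a))
print()
print(render_report("Module M3", "B", rev_b))  # same call, fresh report
```

Nothing in the revision B report is copied from revision A, so there is nothing to fall out of sync.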

Allseas demonstrated this at scale: 22 FEM models, 22 load conditions, over 4 000 pages of code-check reports. Generated in two days. The same scope, done manually, would have taken weeks.

|

Task |

Traditional Workflow |

Automated Workflow |

|

Element identification |

2–5 days (manual tagging) |

Minutes (automatic recognition) |

|

Code compliance checks |

1–3 weeks per model |

Hours, even for 650 000+ elements |

|

Load combination review |

Days of spreadsheet filtering |

Automatic governing load extraction |

|

Report generation |

1–2 weeks per report cycle |

One-click, auto-regenerated after changes |

|

Post-change rework |

Near-full repetition of checks and reports |

Incremental: rerun and regenerate |

|

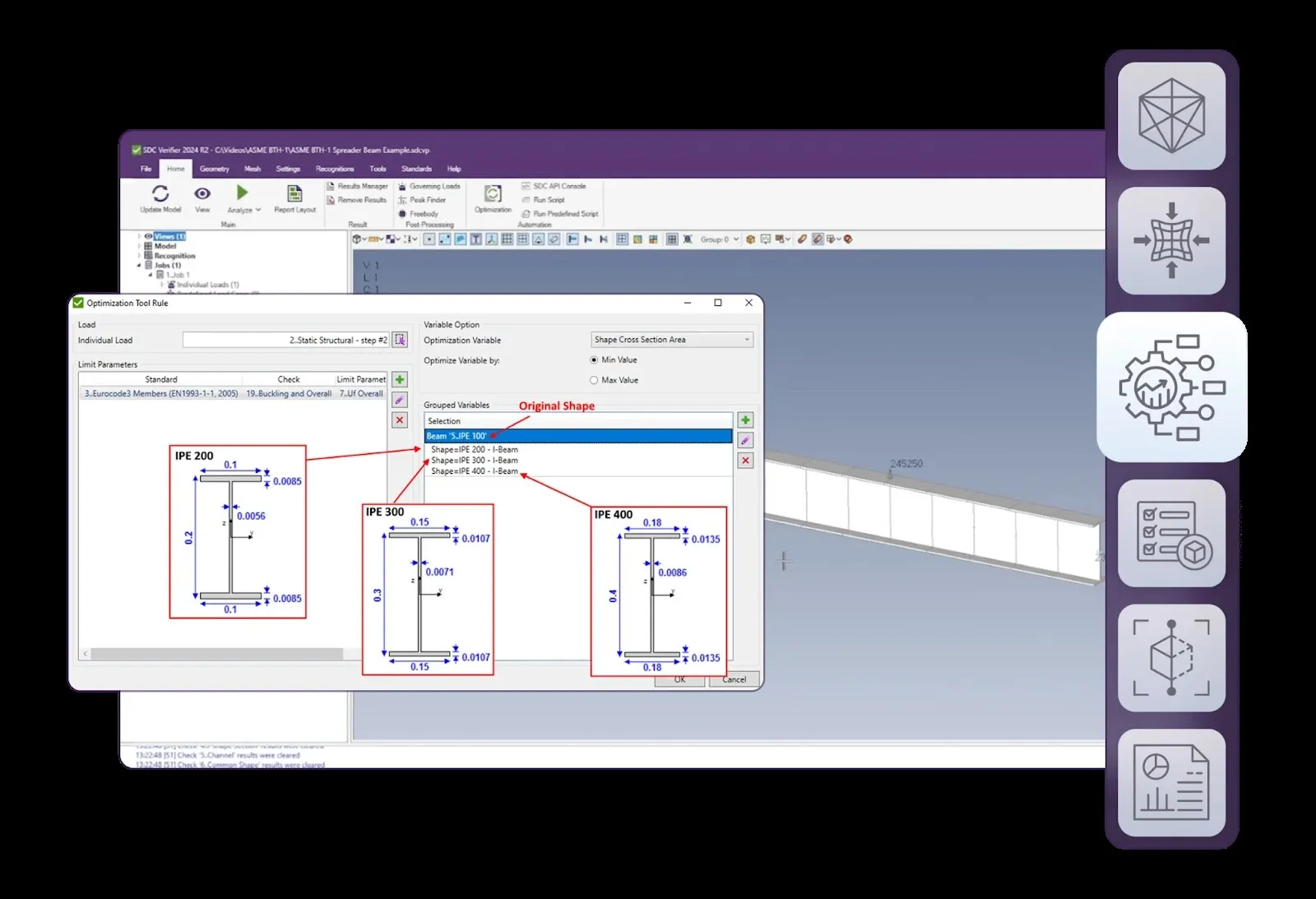

Design optimization |

Iterative manual trial-and-error |

Parametric optimization module |

Optimization: Passing the Code Check Isn't the Finish Line

Passing all code checks is necessary. But it's not sufficient if the design carries 15% more steel than it needs. Excess weight means excess material cost, excess fabrication time, and in offshore or lifting applications, excess load on supporting structures.

It adds up.

Optimization modules address this directly. Rather than manually adjusting plate thicknesses and cross-sections until the model barely passes, the software evaluates combinations of weld types, plate gauges and section profiles to find the lightest configuration that satisfies all applicable standards. RABLE, a Dutch solar engineering firm, used this approach to cut frame weight by up to 50% while maintaining full compliance across six configurations.
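As a rough illustration of the search itself, the Python sketch below tries every combination of available plate thicknesses and keeps the lightest one that passes. It is an exhaustive search over a toy problem, with a simple axial stress limit standing in for real code checks. The geometry, forces, and names are all hypothetical, and a commercial optimization module may well use a different strategy.

```python
from itertools import product

STEEL_DENSITY = 7.85e-6  # kg per mm^3

def lightest_passing(options, weight, passes):
    """Exhaustively search discrete options; return (design, weight) or None."""
    best = None
    names = list(options)
    for values in product(*(options[n] for n in names)):
        design = dict(zip(names, values))
        if not passes(design):
            continue
        w = weight(design)
        if best is None or w < best[1]:
            best = (design, w)
    return best

# Hypothetical two-plate tension member; all numbers are illustrative.
WIDTH = {"flange_t": 300.0, "web_t": 200.0}      # plate widths, mm
FORCE = {"flange_t": 900e3, "web_t": 500e3}      # axial force per plate, N
LENGTH, F_Y = 6000.0, 355.0                      # mm, MPa

def weight(design):
    return sum(STEEL_DENSITY * WIDTH[k] * t * LENGTH for k, t in design.items())

def passes(design):
    # Stand-in for a real code check: axial stress must not exceed yield.
    return all(FORCE[k] / (WIDTH[k] * t) <= F_Y for k, t in design.items())

gauges = [8.0, 10.0, 12.0, 15.0, 20.0]           # available thicknesses, mm
best = lightest_passing({"flange_t": gauges, "web_t": gauges}, weight, passes)
print(best)
```

Exhaustive search grows exponentially with the number of design variables, so it only works for small option sets like this one.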

On a project where steel costs USD 2 000–3 000 per ton fabricated and installed, shaving 15% off structural weight pays for the software many times over. We've seen project teams recover the full license cost on a single optimization run.
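The arithmetic is easy to check. The short Python sketch below assumes a hypothetical 400-ton structure. That figure is not from any project cited here and is only there to make the numbers concrete.

```python
def steel_savings(structure_tons, weight_reduction, cost_per_ton):
    """Cost avoided by removing a fraction of the structural weight."""
    return structure_tons * weight_reduction * cost_per_ton

TONS = 400.0  # hypothetical structure weight, chosen for illustration

low = steel_savings(TONS, 0.15, 2000.0)   # 15% saving at USD 2 000 per ton
high = steel_savings(TONS, 0.15, 3000.0)  # 15% saving at USD 3 000 per ton
print(f"USD {low:,.0f} to USD {high:,.0f}")  # USD 120,000 to USD 180,000
```

Swap in your own tonnage and license cost to see where the break-even sits for a given project.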

Quantifying the Return

Engineering managers struggle to quantify productivity improvements because the baseline is poorly defined. How long should a verification cycle take?

Three metrics work well for tracking ROI:

- Start with verification cycle time. Measure how long it takes from “solver finished” to “verification report delivered.” Compare before and after automation. Teams using automated workflows consistently report 60–80% reductions here. IGN Ingenieurgemeinschaft Nord documented 50% faster strength verification compared to manual calculations on offshore energy projects.

- Track rework hours after model changes. In a manual workflow, any design update triggers a cascade of re-checks and report edits. With automation, the software handles the cascade, not the engineer. Truth be told, this metric alone often justifies the investment.

- Measure code-check coverage. Manual workflows almost always involve selective checking, where engineers pick the members they expect to govern. Automated tools check everything. The member that actually governs isn't always the one the engineer expected. And finding that out during classification review rather than during design, well, that's not a conversation anyone enjoys.
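All three metrics reduce to simple ratios, which makes them easy to track in a spreadsheet or a few lines of Python. The sketch below uses hypothetical before-and-after figures purely as placeholders. The function names are illustrative.

```python
def percent_reduction(before_hours, after_hours):
    """Percentage reduction in hours, for cycle time or rework."""
    if before_hours <= 0:
        raise ValueError("before_hours must be positive")
    return 100.0 * (before_hours - after_hours) / before_hours

def check_coverage(members_checked, members_total):
    """Share of members that were actually code-checked (0 to 1)."""
    if members_total <= 0:
        raise ValueError("members_total must be positive")
    return members_checked / members_total

# Hypothetical figures for one project, before and after automation.
cycle = percent_reduction(before_hours=80.0, after_hours=24.0)
rework = percent_reduction(before_hours=30.0, after_hours=6.0)
manual_cov = check_coverage(members_checked=120, members_total=400)
auto_cov = check_coverage(members_checked=400, members_total=400)

print(f"Verification cycle time: {cycle:.0f}% shorter")
print(f"Rework after a change:   {rework:.0f}% shorter")
print(f"Coverage: {manual_cov:.0%} manual vs {auto_cov:.0%} automated")
```

The important step is recording the "before" numbers while the manual workflow is still in place, since the baseline is hard to reconstruct afterwards.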

The Deloitte 2026 Engineering and Construction Outlook projects a need for 499 000 new construction workers by 2026, with a potential USD 124 billion loss in output from unfilled positions. In that environment, getting more engineering output from the same team isn't a nice-to-have. It's how projects stay on schedule.

Disclaimer: This post was provided by a guest contributor. Coherent Market Insights does not endorse any products or services mentioned unless explicitly stated.